Post 1 introduced the system: an ontology that captures your business, four reasoners that compose on it, grounded in your data cloud. This post is about what we're shipping today so you can use all of it.

We've released skills that let coding agents such as Cortex Code, Claude Code, and Codex write and execute PyRel code directly against your ontology – plus templates with runnable examples to get you started.

PyRel is RelationalAI’s Python-native language for building, querying, and reasoning over ontologies. With the skills installed, the agent generates predictions, configures graph algorithms, defines rules, and formulates optimization problems – all expressed in the ontology, so you can focus on solving business problems.

The benefit runs in both directions: agents make reasoning accessible to non-specialists, and the ontology grounds what the agent produces. With agents, data teams reach all four reasoning types without specialist expertise, and every answer traces back to the logic that produced it. LLMs are great for synthesis and translation, but for decisions that hinge on hard constraints and data lineage, an ontology keeps them honest.

The agent doesn't do the reasoning – RAI does. The agent carries specialist methodology: OR patterns for formulating optimization problems, graph theory for algorithm selection, data science for model configuration. It generates PyRel under the hood, so the analyst stays in business language.

It works both ways. Agents make RAI accessible – data teams use reasoners without specialist expertise. RAI makes agent outputs reliable – ontology grounding ensures answers are defensible and traceable, not just plausible. LLMs are good at compressing information and generating new content – but for decisions grounded in systems with hard physical constraints, they're liable to color in the details. The ontology keeps them honest.

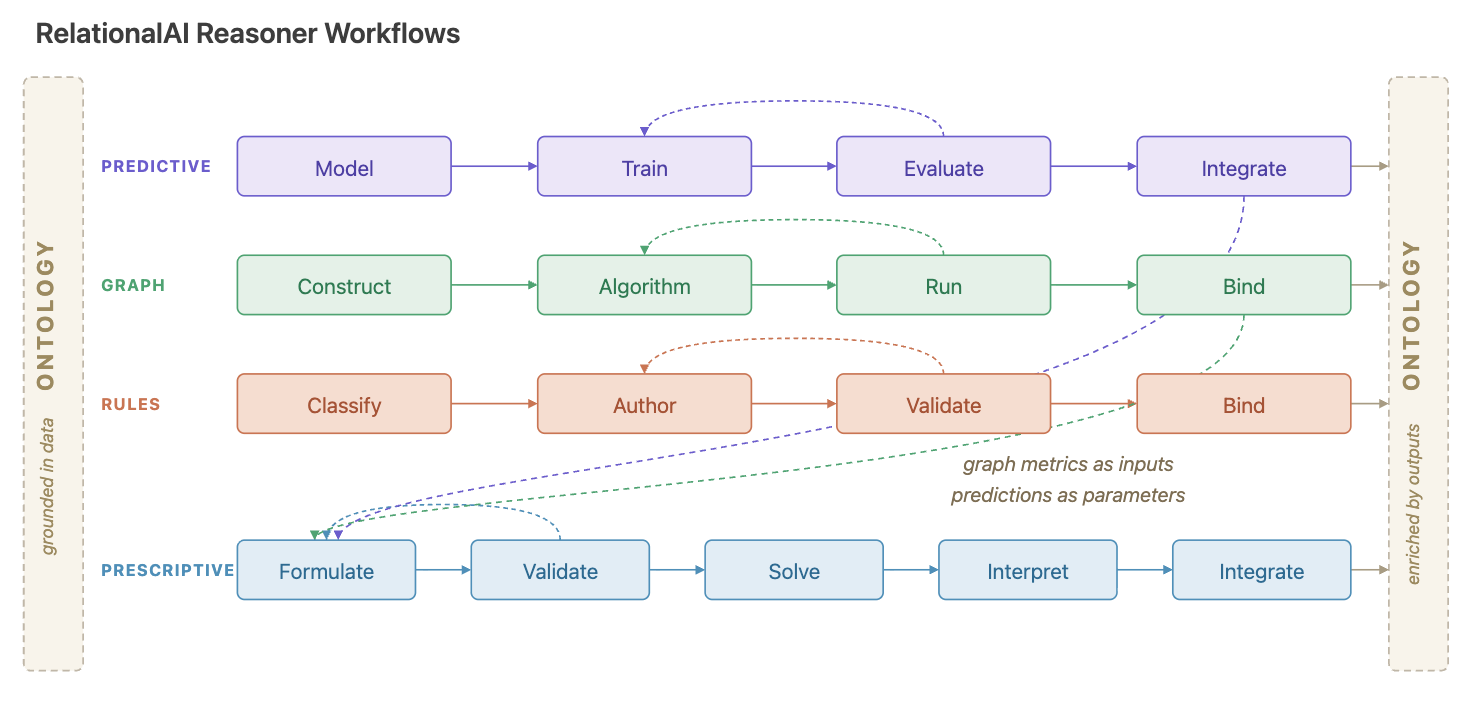

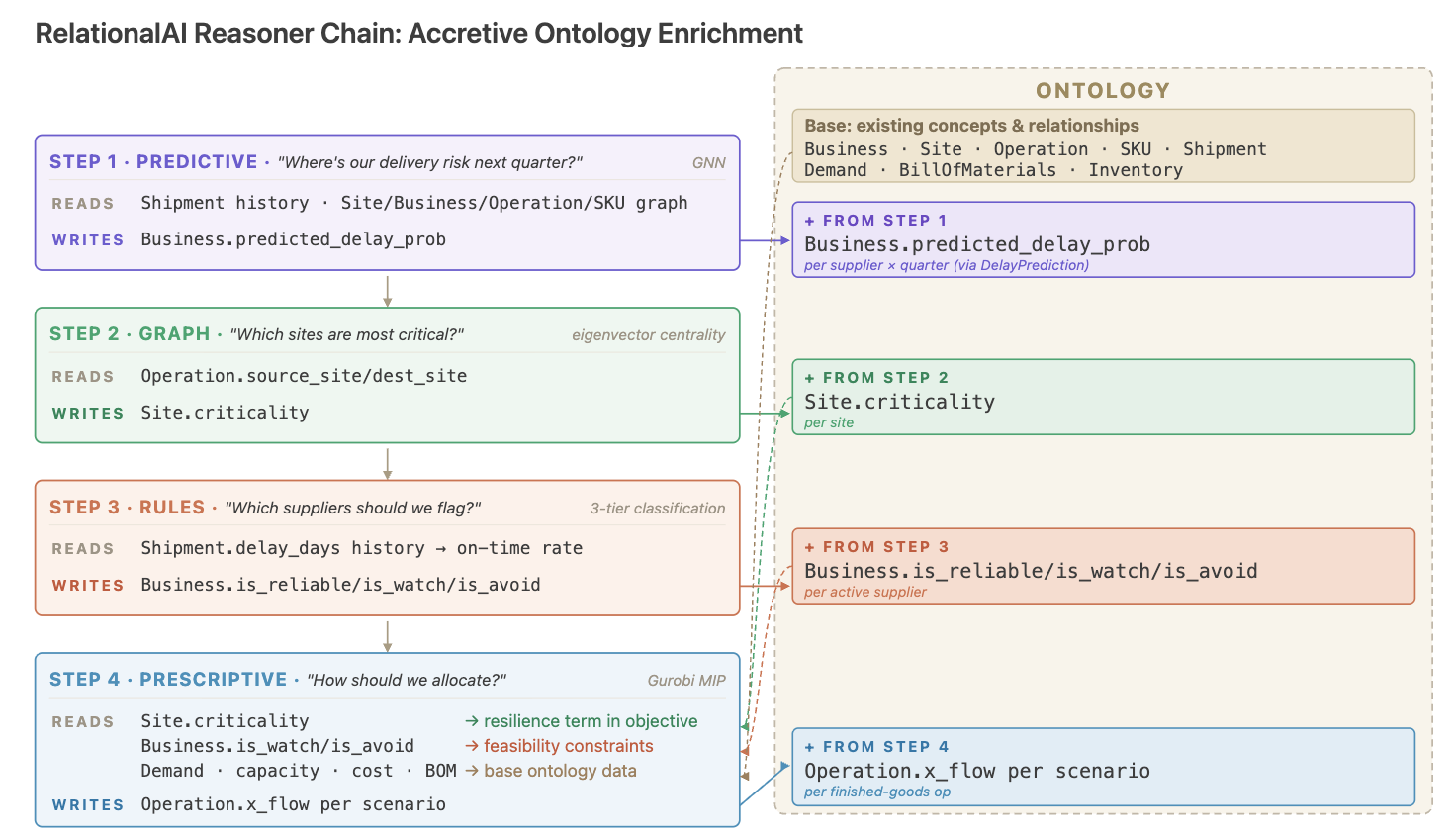

Every workflow reads from the ontology and writes its results back as typed properties on shared concepts. Each reasoning type – predictive, graph, rules, prescriptive – also requires expertise many data teams don't have: data science for model training, graph theory for algorithm selection, logic programming for rule authoring, and operations research for optimization.

Take optimization. The translation path from business question to mathematical formulation to solver to plan is where most attempts stall. The model lives in a spreadsheet or notebook, data assumptions are implicit, and constraints reflect last quarter's reality. Six weeks later, costs have shifted, a warehouse has gone offline, and the formulation is stale.

For an analyst – someone who knows the data and the business questions but isn't going to stand up a reasoning pipeline from scratch – this is where things break down. Coding agents equipped with RelationalAI skills close that gap.

"We were looking for a way to put preventative maintenance optimization in the hands of the people who actually run the plants. The agent skills get a planner from a question to a solved problem in hours, not sprints — and they can pressure-test the result themselves."

— Fouad Toumert, Plant Manager, Obeikan

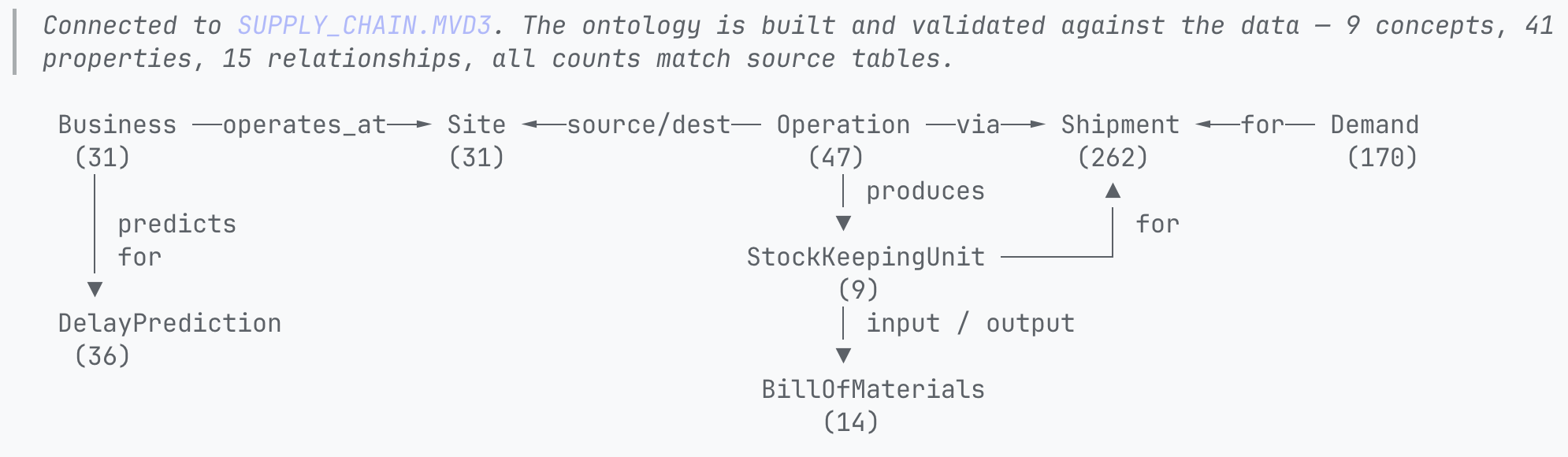

Back to the allocation problem from the last post. The supply chain network ontology is connected to the data cloud and already describes the business with concepts like sites, operations, suppliers, and shipments. The steps below show how the analyst layers reasoning on top of it using the RelationalAI skills.

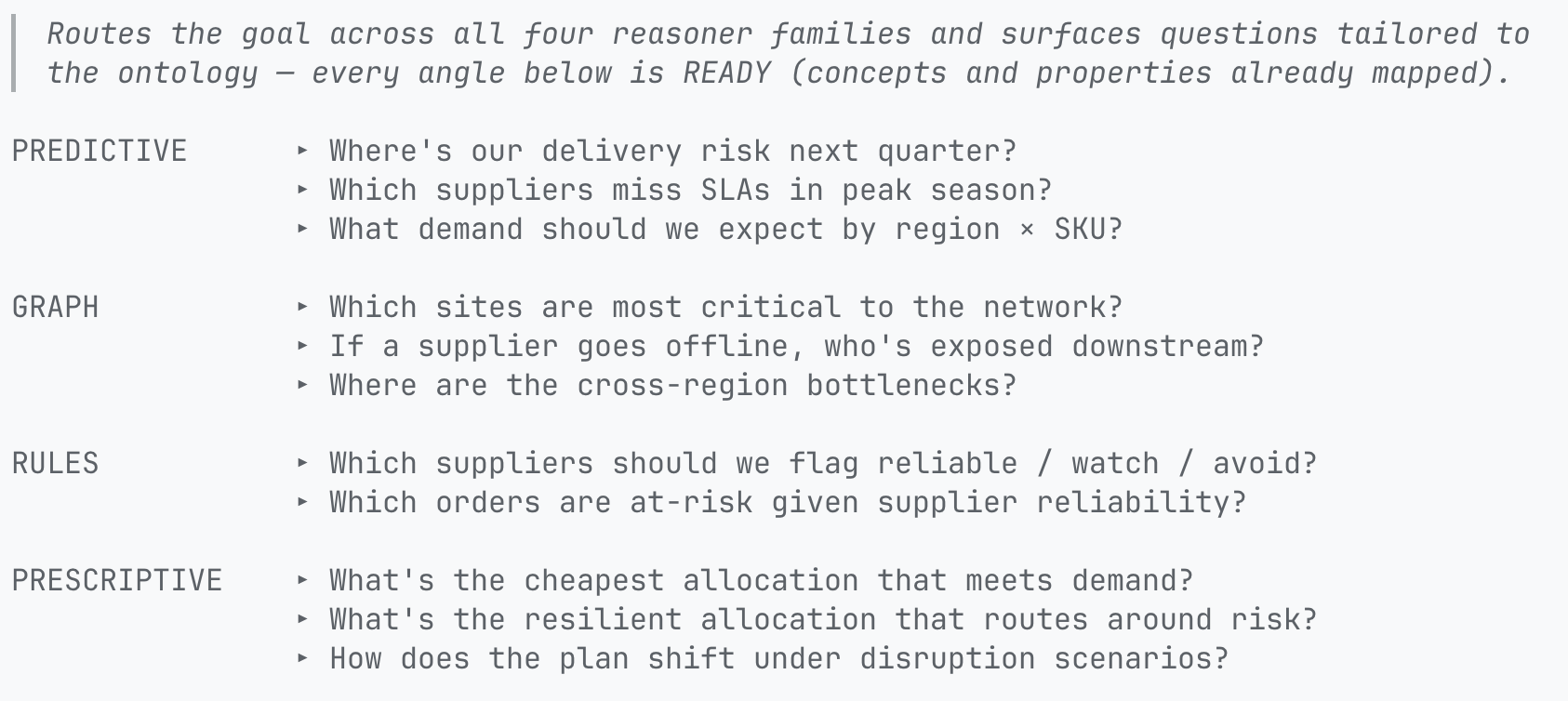

The agent surfaces questions that help the analyst solve the allocation problem using the ontology.

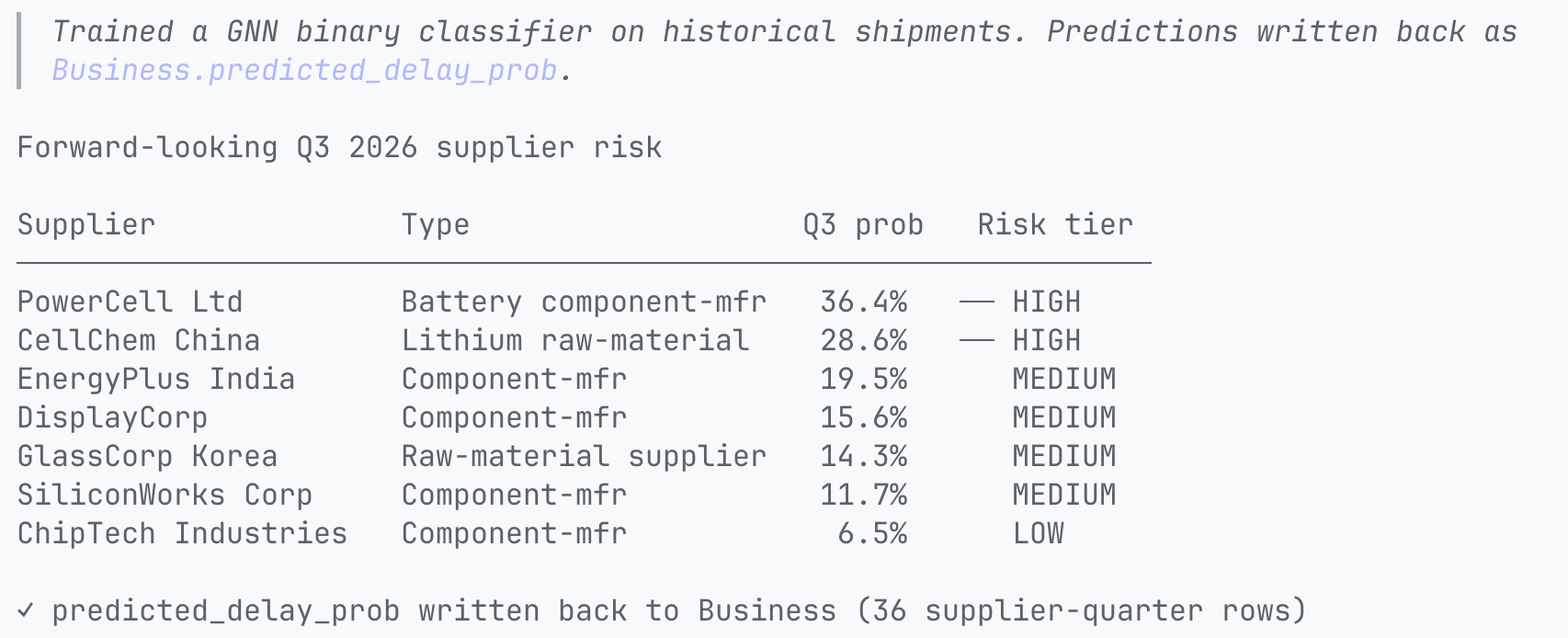

The analyst needs to account for potential delays, but lacks forward-looking risk data. The agent selects a forecasting approach, configures it against the ontology's structure, trains on historical patterns, and writes predicted risk back as a property on the supplier concept. The business question becomes a structured, queryable result attached to the concepts the analyst already established.
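To make the write-back idea concrete, here is a minimal plain-Python sketch. It is not PyRel and not the forecasting approach the agent would select: a smoothed historical delay frequency stands in for a trained model, and the `history` records and supplier names are invented for illustration.

```python
from collections import defaultdict

# Hypothetical shipment history: (supplier, was_delayed).
history = [
    ("acme", True), ("acme", False), ("acme", False), ("acme", False),
    ("bolt", True), ("bolt", True), ("bolt", False),
]

def predicted_delay_prob(records, alpha=1.0):
    """Laplace-smoothed delay frequency per supplier (a stand-in for a real model)."""
    delayed = defaultdict(int)
    total = defaultdict(int)
    for supplier, was_delayed in records:
        total[supplier] += 1
        delayed[supplier] += int(was_delayed)
    return {s: (delayed[s] + alpha) / (total[s] + 2 * alpha) for s in total}

# "Write back": attach the prediction as a property keyed by supplier.
supplier_props = {
    s: {"predicted_delay_prob": p}
    for s, p in predicted_delay_prob(history).items()
}
```

The point of the sketch is the last statement: the prediction ends up as a named property on the supplier rather than as a loose column in a notebook.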

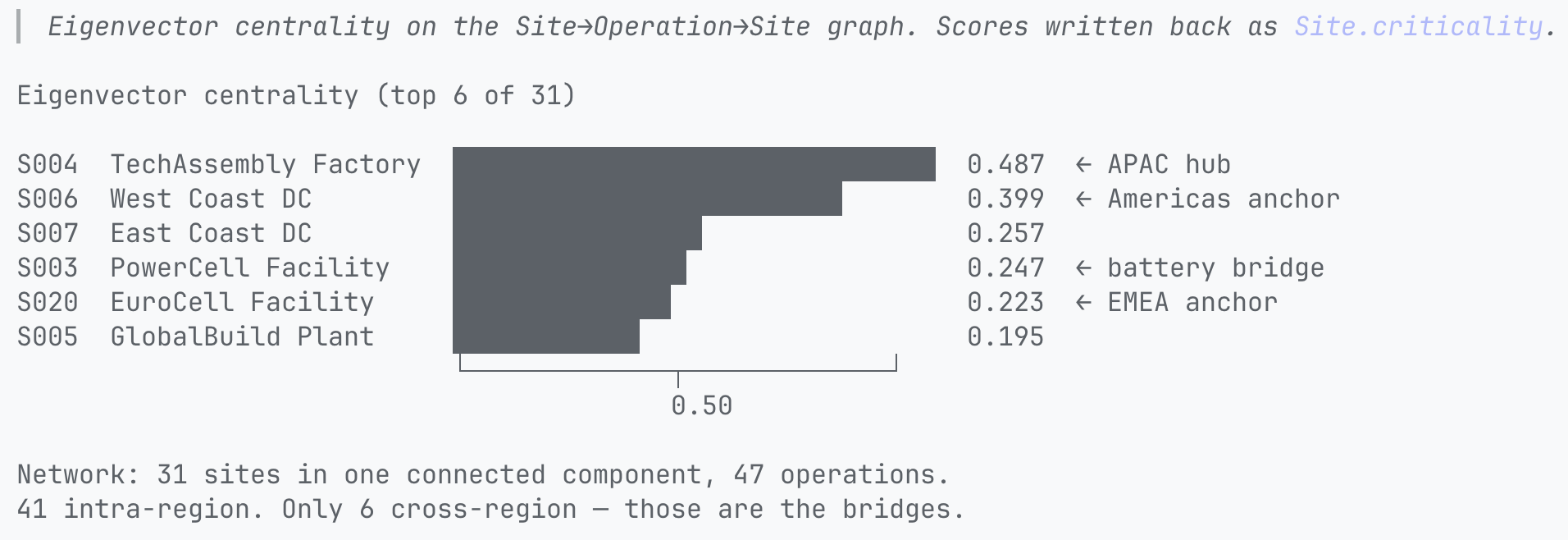

The analyst wants to see which nodes matter most. The agent constructs a graph from the ontology's facility and route relationships, runs a centrality algorithm, and writes criticality scores back as a property on site concepts. The scores are now ontology data, available to the next reasoner.
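As an illustration only, the sketch below computes degree centrality, the simplest centrality measure, over a made-up route list in plain Python. The post does not say which algorithm the agent picks, and this is not PyRel. The site names and the `site_props` structure are assumptions.

```python
from collections import defaultdict

# Hypothetical undirected routes between sites.
routes = [
    ("plant_a", "hub"), ("plant_b", "hub"),
    ("hub", "dc_east"), ("hub", "dc_west"),
    ("plant_a", "dc_east"),
]

def degree_centrality(edges):
    """Fraction of the other nodes that each node is directly connected to."""
    neighbors = defaultdict(set)
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    n = len(neighbors)
    return {node: len(adj) / (n - 1) for node, adj in neighbors.items()}

# Write the score back as a "criticality" property on each site.
site_props = {
    site: {"criticality": score}
    for site, score in degree_centrality(routes).items()
}
most_critical = max(site_props, key=lambda s: site_props[s]["criticality"])
```

On this toy network `hub` touches every other site, so it scores highest. A real network would more likely call for a path-based measure such as betweenness.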

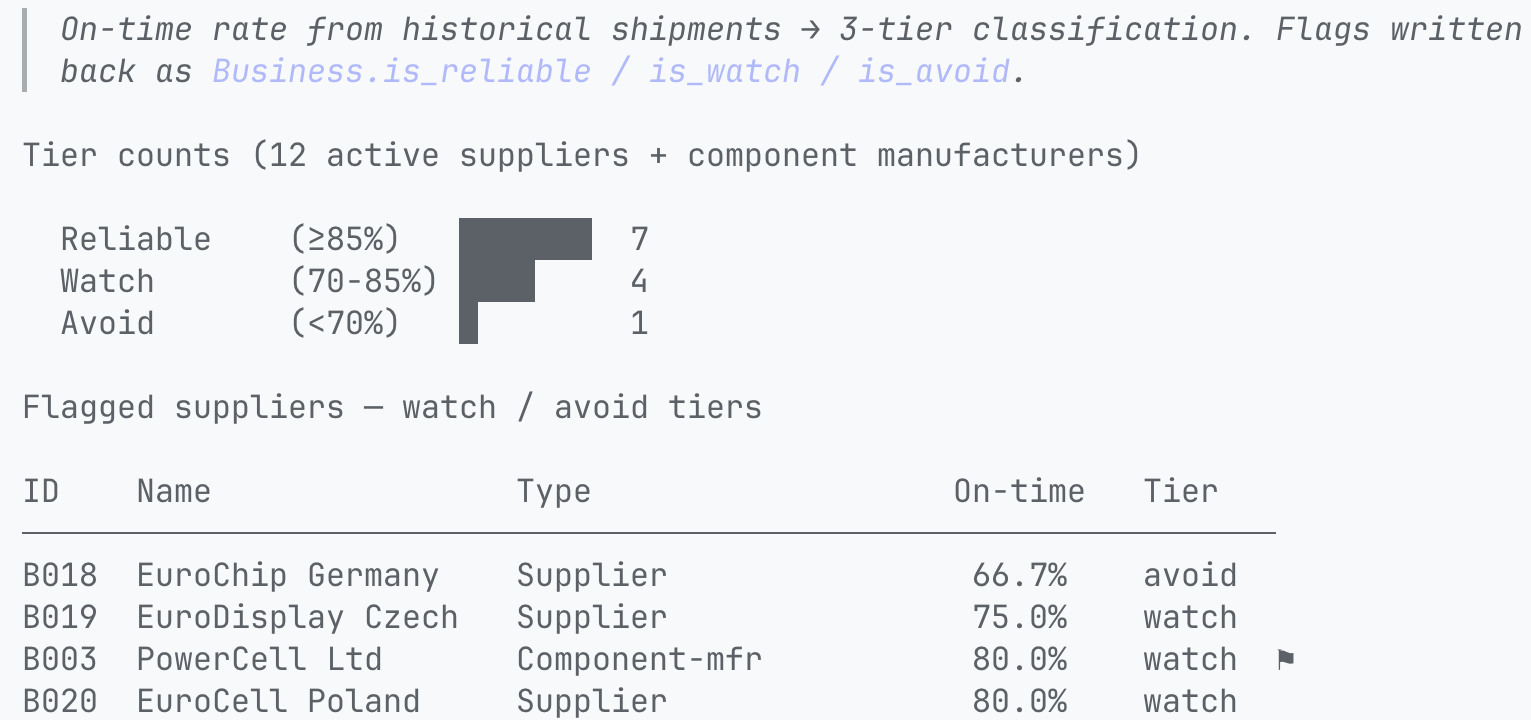

The analyst needs to understand supplier performance. The agent defines reliability rules using the shipment history data, evaluates across all active suppliers, and tiers each one. Tiers are written back as properties so downstream reasoning can constrain or deprioritize allocation through them.
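A rule of this kind can be sketched in a few lines of plain Python. The thresholds, tier names, and shipment counts below are hypothetical, chosen only to show the shape of the logic. In the workflow described here the rules would be expressed in PyRel against the ontology.

```python
# Hypothetical per-supplier shipment statistics.
shipments = {
    "acme": {"delivered": 40, "on_time": 38},
    "bolt": {"delivered": 25, "on_time": 19},
    "cora": {"delivered": 3, "on_time": 3},
}

def tier(stats, min_shipments=10, reliable_rate=0.9):
    """Too little history -> 'unrated'; on-time rate at or above threshold -> 'reliable'; else 'watch'."""
    if stats["delivered"] < min_shipments:
        return "unrated"
    rate = stats["on_time"] / stats["delivered"]
    return "reliable" if rate >= reliable_rate else "watch"

# Write tiers back as properties so later steps can filter on them.
supplier_tiers = {}
for name, stats in shipments.items():
    t = tier(stats)
    supplier_tiers[name] = {"tier": t, "is_reliable": t == "reliable"}
```

Because the rule is explicit, a stakeholder can challenge the 90% threshold or the minimum history and see exactly which suppliers change tier.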

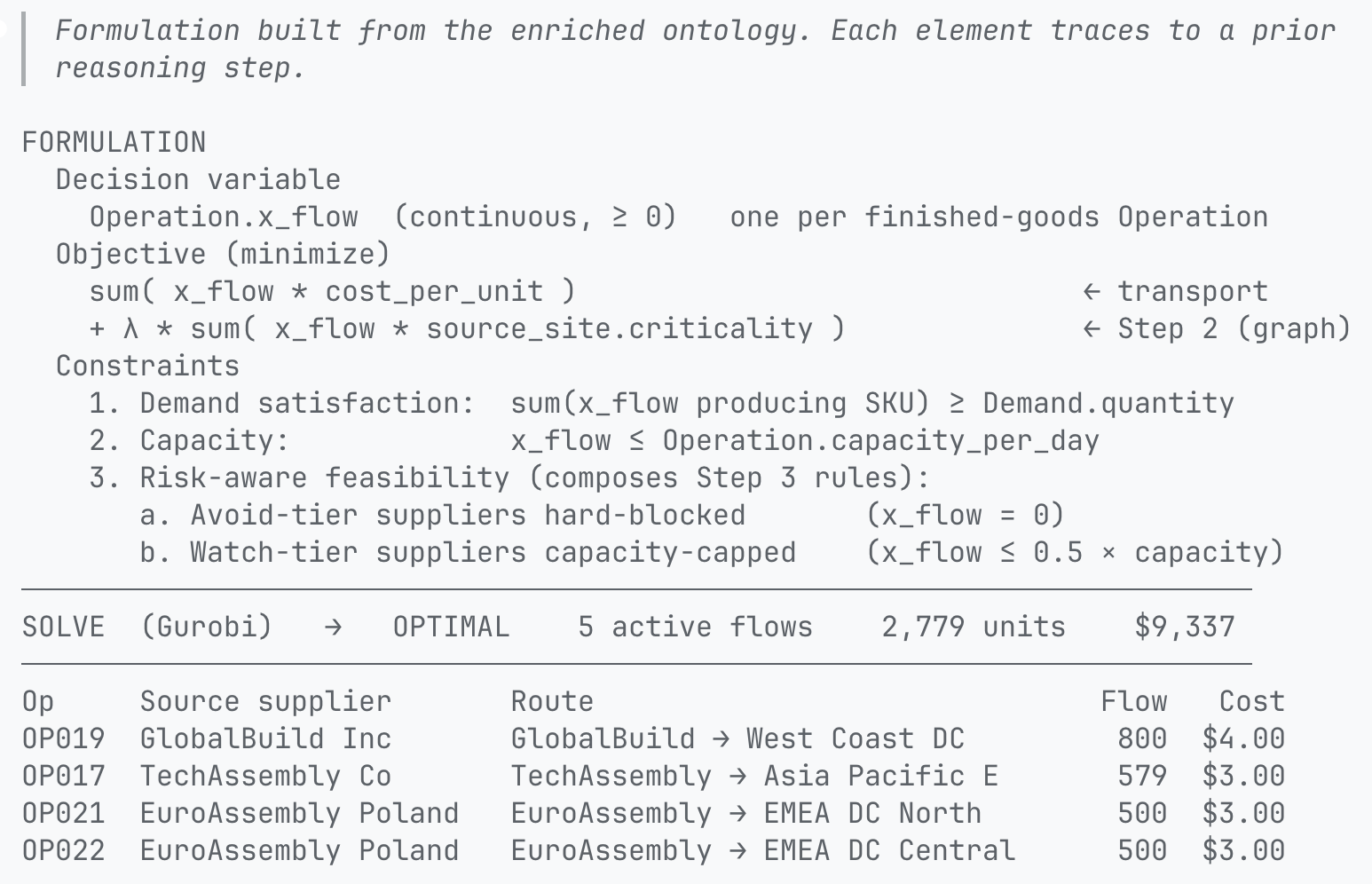

Finally, the analyst is ready to optimize the network and recommend an allocation plan. The ontology has been enriched by three prior reasoning steps, each of which becomes a concrete element of the formulation. The shared ontology consolidates these hand-offs into properties on shared concepts.

The agent defines an optimization problem and shows the analyst the formulation: decision variables, constraints, and objectives. The analyst checks the math, confirms logic with stakeholders, then solves it.
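To show what "decision variables, constraints, and objectives" look like on a toy case, here is a plain-Python sketch of a single-demand allocation. It fills demand from the cheapest eligible supplier first, which is optimal only for this special case (one demand, linear costs, per-supplier capacity caps). It is not a general solver and not PyRel. The data, the `risk_penalty` weight, and the way risk enters the objective are all assumptions.

```python
# Hypothetical supplier data, including properties written by earlier steps.
suppliers = {
    "acme": {"unit_cost": 10.0, "capacity": 60, "predicted_delay_prob": 0.1, "is_reliable": True},
    "bolt": {"unit_cost": 8.0, "capacity": 80, "predicted_delay_prob": 0.5, "is_reliable": True},
    "cora": {"unit_cost": 7.0, "capacity": 50, "predicted_delay_prob": 0.2, "is_reliable": False},
}

def allocate(suppliers, demand, risk_penalty=10.0):
    """Decision variables: units x[s] ordered from each supplier.
    Constraints: 0 <= x[s] <= capacity, reliable suppliers only, sum(x) == demand.
    Objective: minimize sum of x[s] * (unit_cost + risk_penalty * predicted_delay_prob)."""
    def adjusted(props):
        return props["unit_cost"] + risk_penalty * props["predicted_delay_prob"]

    eligible = sorted(
        (s for s in suppliers if suppliers[s]["is_reliable"]),
        key=lambda s: adjusted(suppliers[s]),
    )
    plan, remaining = {}, demand
    for s in eligible:
        qty = min(suppliers[s]["capacity"], remaining)
        if qty > 0:
            plan[s] = qty
            remaining -= qty
    if remaining > 0:
        raise ValueError("demand exceeds eligible capacity")
    cost = sum(q * adjusted(suppliers[s]) for s, q in plan.items())
    return plan, cost

plan, cost = allocate(suppliers, demand=100)
```

Note how the earlier steps show up in the formulation: the predicted delay probability shifts the objective, and the reliability tier acts as a constraint that excludes the cheapest supplier.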

In the baseline plan, the optimization already routes partially around the highest-centrality node before any disruption is simulated. The graph reasoner's output is in the objective, so resilience is built into the baseline.

PyRel is declarative, so the problem and its solutions are all queryable. The analyst can ask "which constraints are active?" or "which route has the lowest cost?" and read the answer from the ontology.

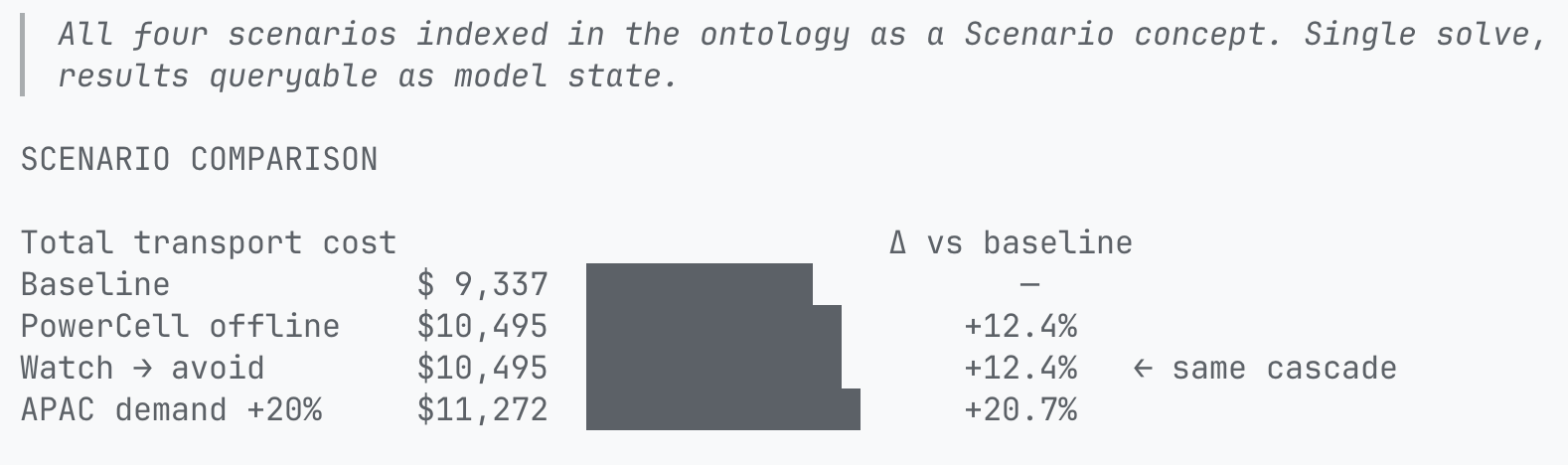

The solver returned an optimal plan, but the analyst's job is not done until they understand the realistic decision space under uncertainty. Within minutes, the agent encodes scenarios, re-solves, and returns side-by-side comparisons. The analyst iterates to build intuition about the trade-offs – cost, resilience, single-source exposure – to inform the decision.
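The encode, re-solve, compare loop can be sketched in plain Python as follows. Scenarios here are capacity overrides on a made-up baseline, and the solver is the same cheapest-first fill used for toy single-demand problems. None of this is PyRel, and the supplier names, costs, and scenario labels are hypothetical.

```python
# Hypothetical baseline: supplier -> (risk-adjusted unit cost, capacity).
baseline = {"acme": (11.0, 60), "bolt": (13.0, 80), "dune": (15.0, 100)}

# Each scenario overrides capacities; a capacity of 0 models an outage.
scenarios = {
    "baseline": {},
    "acme_offline": {"acme": 0},
    "bolt_reduced": {"bolt": 30},
}

def solve(data, demand):
    """Cheapest-first fill; optimal only for this single-demand toy problem."""
    plan, remaining = {}, demand
    for name, (cost, cap) in sorted(data.items(), key=lambda kv: kv[1][0]):
        qty = min(cap, remaining)
        if qty > 0:
            plan[name] = qty
            remaining -= qty
    if remaining > 0:
        raise ValueError("infeasible: demand exceeds capacity")
    return plan, sum(qty * data[name][0] for name, qty in plan.items())

# Re-solve per scenario and collect results side by side.
comparison = {}
for label, overrides in scenarios.items():
    data = {n: (c, overrides.get(n, cap)) for n, (c, cap) in baseline.items()}
    scenario_plan, total = solve(data, demand=100)
    comparison[label] = {
        "plan": scenario_plan,
        "total_cost": total,
        "suppliers_used": len(scenario_plan),
    }
```

Reading `comparison` row by row gives the kind of trade-off view described above: what an outage costs, and how many suppliers each plan depends on.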

Solutions integrate back into the ontology as first-class objects available downstream. Allocation plans per scenario are ontology concepts the analyst can pivot on, hand off, or chain into the next question.

In a single session, the analyst moved from a business question through reasoning steps to a solved plan. They asked business questions in natural language; the agent handled the coding.

The ontology compounded. Each reasoner left typed properties behind – `predicted_delay_prob`, `criticality`, `is_reliable`, `x_flow` per scenario – queryable for the next question, the next analyst, the next team. A second analyst doesn't start from the SQL schema; they start from where this one left off.
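One way to picture the accumulated state is as typed properties on shared concepts. The dataclasses below are a plain-Python analogy, not PyRel and not the actual ontology. The property names come from this post, while the `Route` concept and the choice to hang `x_flow` on it are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

@dataclass
class Supplier:
    name: str
    predicted_delay_prob: Optional[float] = None  # written by the predictive step
    is_reliable: Optional[bool] = None            # written by the rules step

@dataclass
class Site:
    name: str
    criticality: Optional[float] = None           # written by the graph step

@dataclass
class Route:  # hypothetical concept linking a supplier to a site
    supplier: Supplier
    site: Site
    x_flow: Dict[str, float] = field(default_factory=dict)  # scenario -> flow, from the optimizer

acme = Supplier("acme", predicted_delay_prob=0.1, is_reliable=True)
hub = Site("hub", criticality=1.0)
all_routes = [Route(acme, hub, x_flow={"baseline": 60, "acme_offline": 0})]

# A later question combines properties left by different reasoners.
exposed = [
    r for r in all_routes
    if r.site.criticality > 0.8 and r.x_flow["baseline"] > 0
]
```

The final query reads a graph result and an optimization result in one expression, which is the sense in which a second analyst starts from where the first one left off.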

We're seeking early access partners to shape the agent-guided workflow. Please contact us to get started.