As we discussed in our recent post on the AI value gap, closing the gap requires more than context alone. It requires ontology-grounded reasoning.

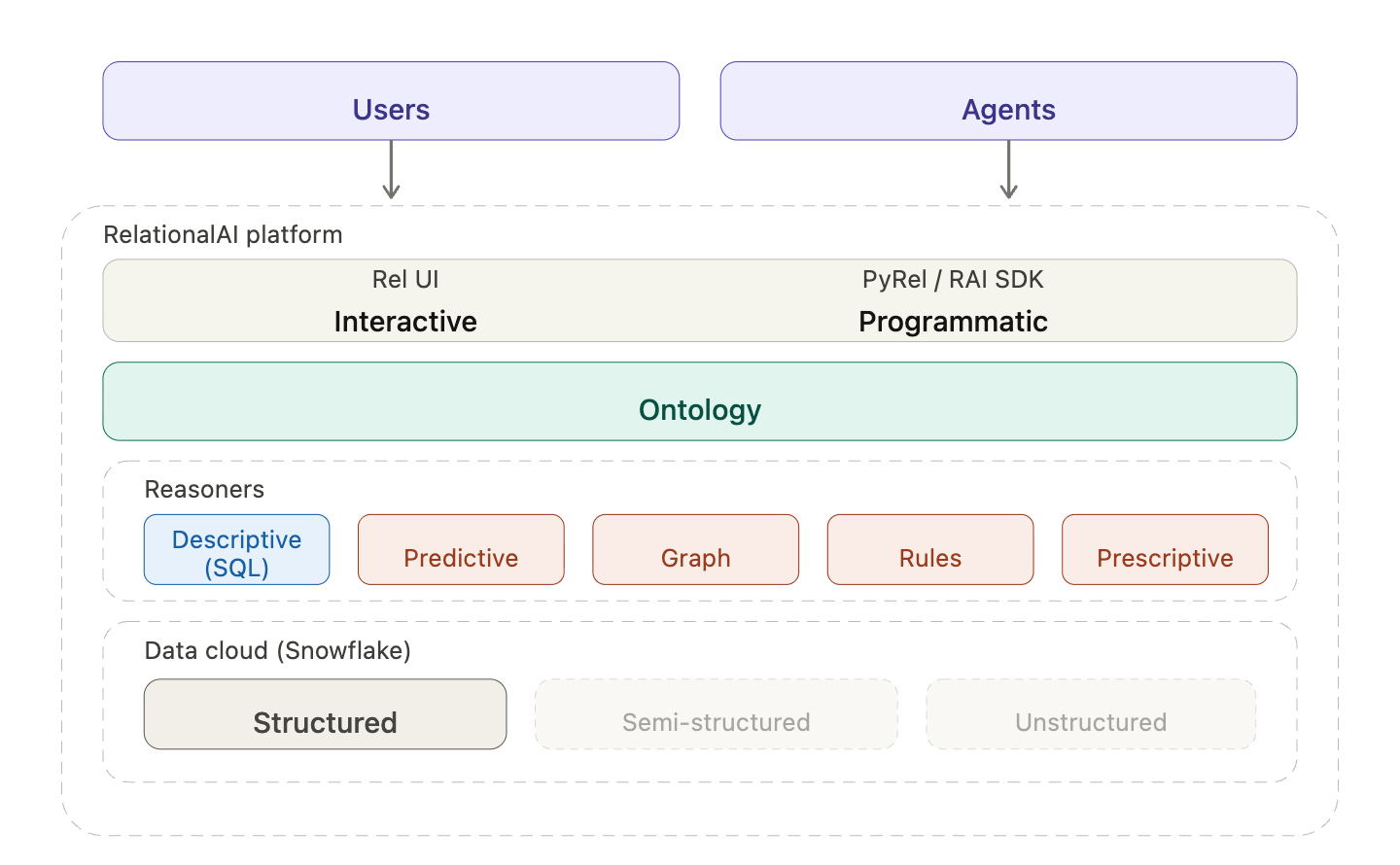

One ontology captures your business. Four composable reasoners – predictive, graph, rules-based, prescriptive – answer the questions SQL can't, all grounded in the same shared model, all running native to your data cloud.

A business analyst today can ask one kind of question well and then hit a limit on the next. "What were our shipping costs last quarter by distribution center?" SQL handles that well.

But the questions that drive complex decisions look different:

If business intelligence is your dashboard – speed, fuel, distance – decision intelligence is the navigation. You need both in the same car.

Most attempts to bring optimization, graph analysis, business rules, or predictive modeling into business decisions stall for the same three reasons.

The disposable formulation. A team spends weeks building a custom model. It runs once. Business conditions change and the formulation is obsolete – locked in a notebook or spreadsheet, assumptions undocumented, drifting from the source data. It becomes a shadow system: one more thing to maintain, reconcile, and eventually retire. Someone asks "can we rerun with updated numbers?" and the answer is "start over."

The expertise bottleneck. Formulation, solver configuration, algorithm selection, result interpretation – each step needs specialized skill. Operations research for optimization. Graph theory for network analysis. Data science for predictions. The people who need the answers – planners, analysts, operations leads – aren't the people who can build the models. And decision-makers need more than a single optimal number; they need to understand trade-offs and robustness under changing assumptions.

The data disconnect. The inputs exist but are scattered across systems. Demand predictions, costs, and capacities are uncertain and interdependent. By the time you've pinned down the right problem to solve, the inputs have already moved.

Every shift in a customer's network – a closed facility, a new lane, a capacity change – used to send us back to the modeling stage. We needed an approach where the formulation lived next to the data and could move with it, not in a notebook on someone's laptop. – Andrea Morgan-Vandome, Chief Innovation Officer, Blue Yonder

That's what we've been building at RelationalAI: a decision intelligence system that extends your data cloud with the reasoning needed to make decisions – optimization, graph analysis, predictions, and business rules – all working together, all grounded in the same shared model.

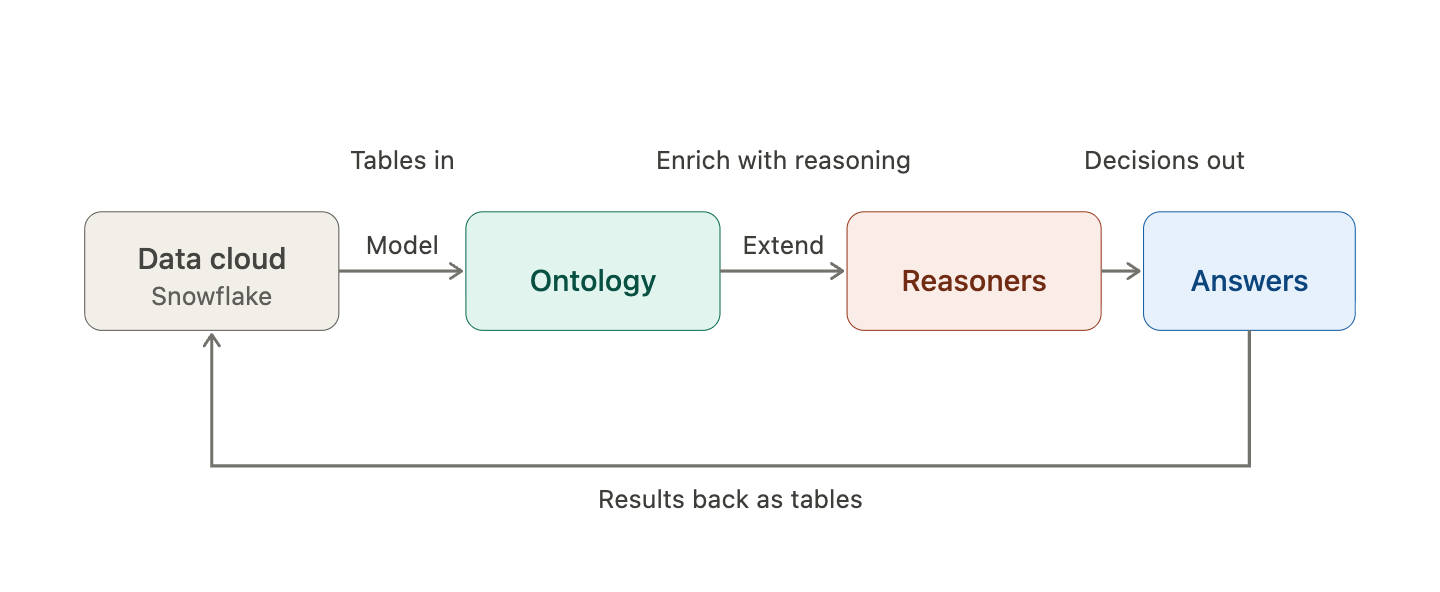

An ontology captures the business. Your schema tells you what data you have; the ontology captures what that data means – the concepts, constraints, and rules that reflect how the business actually operates. One supply chain ontology supports facility location, routing, production planning, and allocation – each as a distinct reasoning problem, each building on what's already been modeled. The ontology persists and evolves, even as individual analyses change.

Four reasoning types answer different kinds of questions on the same model: predict what will happen, analyze how things are connected, evaluate what complies, and optimize what to do. Each one reads from and writes back to the shared ontology, so the output of one becomes a typed input to the next – no custom joins or brittle pipeline logic. The ontology is what makes the hand-off durable. Without it, every pair of reasoners needs a custom translation layer; with it, a forecast property is consumed by the next reasoner like any other input.
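The hand-off described above can be sketched in plain Python. This is an illustration only, not PyRel or any RelationalAI API: `DemandPoint`, `predictive_step`, and `total_forecast_demand` are hypothetical names, and the forecaster is a trivial two-quarter average standing in for a real predictive model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DemandPoint:
    """Hypothetical ontology concept: a region that generates demand."""
    name: str
    history: List[float]                    # past quarterly orders, in units
    forecast_units: Optional[float] = None  # written by the predictive step


def predictive_step(points: List[DemandPoint]) -> None:
    """Stand-in forecaster: average of the last two quarters (an assumption)."""
    for p in points:
        p.forecast_units = sum(p.history[-2:]) / 2


def total_forecast_demand(points: List[DemandPoint]) -> float:
    """Downstream step: reads the forecast like any other typed property."""
    missing = [p.name for p in points if p.forecast_units is None]
    if missing:
        raise ValueError(f"no forecast for: {missing}")
    return sum(p.forecast_units for p in points)


points = [
    DemandPoint("North", [100.0, 120.0, 140.0]),
    DemandPoint("South", [80.0, 90.0, 70.0]),
]
predictive_step(points)                # writes forecast_units on each concept
total = total_forecast_demand(points)  # consumes it with no translation layer
print(total)
```

The point of the sketch is that the downstream step never parses a file or joins on a key. It reads a typed property from the same concept the forecaster wrote to.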

All of it runs native to your data cloud; Snowflake is the platform currently supported. Inputs come from your existing tables. Reasoner outputs go back as tables. No data movement, no separate pipeline, no second system to maintain. Results show up in the interfaces your team already uses – Snowflake Intelligence, AI agents, dashboards. That's the difference between reasoning as a co-located capability and reasoning as a bolt-on tool fed by an ETL pipe.

That combination – ontology, reasoners, data-cloud-native – is what makes structured reasoning reusable, accessible, and economical. New questions extend what's already there, formulations compose, and a derived relationship can collapse a thicket of inputs into the small, relevant set needed for the problem at hand.

I like to give them a problem: tell me all of the optimization opportunities, and tell me what is best to increase my revenue. It’s going to reason through all of those things, create the business case, and tell me what I should do… You put it on a common platform, so you get lots of reuse, so you’re not doing one-off things, reinventing the wheel all the time. – Mark Austin, Vice President of Data Science and AI at AT&T for theCube

Back to the allocation question. Answering it takes four distinct reasoning approaches using the same ontology.

Predictive answers "what will happen?" Start by forecasting regional demand for the next quarter. The predictive reasoner uses graph neural networks (GNNs) grounded in the ontology's structure – historical orders, seasonal patterns, regional trends – and writes those forecasts back as properties on demand-point concepts. The result is a typed, queryable forecast that other reasoners can consume as structured input, not a CSV sitting in a team folder.

Graph answers "how is it connected?" Centrality algorithms run over the ontology's facility and route relationships to identify which warehouses are critical connectors – the nodes whose failure would disrupt the most routes. Criticality scores attach to warehouse concepts in the ontology, available to any downstream reasoning step. The same reasoner supports community detection, shortest paths, reachability, and other graph algorithms on the same concepts.
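To make "nodes whose failure would disrupt the most routes" concrete, here is a minimal sketch in plain Python. It is a brute-force stand-in for a centrality algorithm, not the graph reasoner itself: it removes one warehouse at a time and counts how many pairs of remaining nodes lose their connection. The network and all names are hypothetical.

```python
from collections import deque
from itertools import combinations

# Hypothetical undirected route network (assumed data, for illustration only).
ROUTES = {
    ("Plant", "WH_A"), ("Plant", "WH_B"),
    ("WH_A", "Store_1"), ("WH_B", "Store_1"),
    ("WH_B", "Store_2"),
}


def adjacency(edges, removed=None):
    """Build an adjacency map, skipping every edge that touches `removed`."""
    adj = {}
    for u, v in edges:
        if removed in (u, v):
            continue
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def reachable(adj, start):
    """Breadth-first search: all nodes reachable from `start`."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adj.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def criticality(edges, node):
    """Number of remaining node pairs disconnected when `node` fails."""
    others = sorted({n for edge in edges for n in edge} - {node})
    adj = adjacency(edges, removed=node)
    return sum(1 for a, b in combinations(others, 2)
               if b not in reachable(adj, a))


scores = {wh: criticality(ROUTES, wh) for wh in ("WH_A", "WH_B")}
print(scores)
```

In this toy network `WH_B` is the only path to `Store_2`, so it scores higher than `WH_A`. A production graph reasoner would use a proper centrality measure; the removal count here is only meant to show what the score represents.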

Rules-based answers "does it comply?" Supplier delivery history and compliance thresholds are evaluated against declarative business rules. Suppliers that fail are flagged in the ontology so downstream reasoning can constrain or deprioritize allocation through them. The rules themselves are inspectable and auditable – not code buried in a pipeline, not implicit in someone's query.
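One way to picture rules that are "inspectable and auditable" is to hold them as data rather than as branches inside a pipeline. The sketch below is plain Python, not the rules-based reasoner: the `Rule` class, the 95% threshold, the certification rule, and the supplier records are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    """A named rule with a human-readable description and a check."""
    name: str
    description: str
    passes: Callable[[dict], bool]


# Hypothetical rules and thresholds.
RULES = [
    Rule("on_time_rate", "On-time delivery rate must be at least 95%",
         lambda s: s["on_time_rate"] >= 0.95),
    Rule("valid_certification", "Supplier certification must be current",
         lambda s: s["certified"]),
]

# Hypothetical supplier facts.
SUPPLIERS = {
    "Acme": {"on_time_rate": 0.97, "certified": True},
    "Globex": {"on_time_rate": 0.91, "certified": True},
    "Initech": {"on_time_rate": 0.99, "certified": False},
}


def evaluate(suppliers: Dict[str, dict], rules: List[Rule]) -> Dict[str, List[str]]:
    """Return, per supplier, the names of the rules it fails."""
    return {name: [r.name for r in rules if not r.passes(facts)]
            for name, facts in suppliers.items()}


violations = evaluate(SUPPLIERS, RULES)
flagged = sorted(name for name, failed in violations.items() if failed)
print(flagged)
print(violations)
```

Because each flag carries the name of the rule that produced it, a downstream step (or an auditor) can ask why a supplier was excluded without reading the evaluation code.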

Prescriptive answers "what should we do?" It takes everything upstream – demand forecasts, criticality scores, supplier flags, warehouse capacities – and formulates an optimization problem against the ontology. Decision variables define what's being decided (how much to ship through each route). Constraints enforce feasibility (capacity limits, demand satisfaction, supplier exclusions). An objective encodes the goal (minimize transport cost while weighting network resilience). A solver then searches across all feasible combinations to find the best plan.
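The three parts of a formulation (decision variables, constraints, objective) can be shown on a toy allocation problem. This is a sketch under stated assumptions: the capacities, demands, and lane costs are invented, shipments move in blocks of 10 units, and exhaustive enumeration stands in for a real solver, which would handle far larger problems without enumerating them. The resilience weighting mentioned above is left out to keep the example small.

```python
from itertools import product

# Hypothetical inputs (in the article's setting these would come from the ontology).
CAPACITY = {"WH_A": 30, "WH_B": 50}          # units per period
DEMAND = {"North": 40, "South": 30}          # forecast units per region
COST = {                                     # transport cost per unit, per lane
    ("WH_A", "North"): 4, ("WH_A", "South"): 6,
    ("WH_B", "North"): 5, ("WH_B", "South"): 3,
}
STEP = 10                                    # shipments move in blocks of 10
LANES = sorted(COST)


def is_feasible(plan):
    """Constraints: stay within each capacity and meet each demand exactly."""
    within_capacity = all(
        sum(q for (wh, _), q in plan.items() if wh == w) <= cap
        for w, cap in CAPACITY.items())
    demand_met = all(
        sum(q for (_, region), q in plan.items() if region == r) == need
        for r, need in DEMAND.items())
    return within_capacity and demand_met


def total_cost(plan):
    """Objective: total transport cost of the plan."""
    return sum(COST[lane] * qty for lane, qty in plan.items())


def solve():
    """Enumerate every candidate plan and keep the cheapest feasible one."""
    # Decision variables: units shipped on each lane, in multiples of STEP.
    choices = [range(0, DEMAND[region] + 1, STEP) for _, region in LANES]
    candidates = (dict(zip(LANES, qty)) for qty in product(*choices))
    return min((p for p in candidates if is_feasible(p)), key=total_cost)


best = solve()
print(best, total_cost(best))
```

Here the capacity limit on `WH_A` is binding: it is the cheapest source for North but can cover only 30 of the 40 units, so the remainder shifts to `WH_B`.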

Each step enriches the ontology for the next. The output of one reasoner becomes a typed input to another – not via custom joins or brittle pipeline logic, but as properties on shared concepts. That's composability. It's what makes adjacent questions cheap to ask. "Where should we open new distribution facilities to serve every region at minimum cost?" uses different decision variables on the same concepts, with no pipeline to rebuild. Any reasoner can read from any other through the shared ontology.
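The facility question can be sketched the same way. The concepts are still warehouses and regions; what changes is the decision variable, which is now "which sites are open" instead of "how much flows on each lane". The opening costs and service costs below are invented, and subset enumeration is again a stand-in for a solver.

```python
from itertools import combinations

# Hypothetical fixed cost of opening each candidate site.
OPEN_COST = {"WH_A": 30, "WH_B": 40, "WH_C": 35}

# Hypothetical cost of serving each region from each site.
SERVE_COST = {
    "North": {"WH_A": 20, "WH_B": 60, "WH_C": 70},
    "South": {"WH_A": 70, "WH_B": 30, "WH_C": 25},
    "East":  {"WH_A": 40, "WH_B": 35, "WH_C": 80},
}


def plan_cost(opened):
    """Fixed cost of the open sites plus each region's cheapest open site."""
    fixed = sum(OPEN_COST[site] for site in opened)
    service = sum(min(costs[site] for site in opened)
                  for costs in SERVE_COST.values())
    return fixed + service


def best_facilities():
    """Try every non-empty subset of sites and keep the cheapest."""
    sites = sorted(OPEN_COST)
    subsets = (subset
               for k in range(1, len(sites) + 1)
               for subset in combinations(sites, k))
    return min(subsets, key=plan_cost)


chosen = best_facilities()
print(chosen, plan_cost(chosen))
```

Every region is served because each subset is non-empty and each region takes its cheapest open site. Only the variables and objective differ from the allocation sketch; the underlying entities do not.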

The pattern generalizes beyond supply chain network allocation: energy grid planning, financial portfolio optimization, manufacturing maintenance scheduling, and wherever else you have structured data and hard constraints.

The common thread is networked, data-rich systems where decisions require respecting hard constraints or weighing multiple factors. That same substrate is what enterprise agents need to give grounded answers.

Enterprise agents are becoming the default interface to business data. They interpret a request, pull relevant context, and generate a response. What they can't do on their own is guarantee that an answer respects a capacity limit, satisfies a regulatory rule, or traces back to a specific data lineage. Those are structured reasoning problems – and that's where RelationalAI fits in the agentic stack.

Take the allocation problem. An agent can understand what the analyst is asking – allocate shipments to minimize transport costs while meeting demand – and translate it into a formulation against the ontology. But the actual optimization – respecting capacities, honoring constraints, balancing cost against resilience – requires mathematical guarantees that pattern-matching doesn't provide. The allocation either satisfies every constraint or it doesn't. There's no "mostly feasible." You can patch around this with handwritten guardrails and post-hoc validation, or you can ground the agent in a shared reasoning substrate: an ontology that encodes the constraints, relationships, and real-world conditions the decision has to respect.

This matters because decision-making is harder to evaluate than code. With software, you write unit tests – it passes or it doesn't. But when a system recommends reordering 17 cases of product for a store next week, you can't easily verify whether the answer should have been 12 or 25. Enterprise agents need to be grounded in explicit constraints and traceable logic, not just pattern-matched from data.

Grounding also makes the agent's reasoning explainable by design. A capacity constraint isn't x_ij ≤ 500 – it's "shipments through Warehouse B can't exceed its weekly capacity of 500 units." Because PyRel – RelationalAI's Python-native language for expressing ontology concepts and reasoning – is declarative, the formulation is queryable: Which constraints are active? What's the capacity limit? Answered directly from the ontology, not by reading code. The reasoning path is auditable end-to-end – which data fed the forecast, which algorithm scored centrality, which rules flagged suppliers, which constraints and objective the solver optimized against.
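A rough picture of what "queryable" means here, in plain Python and not PyRel syntax: the constraint is an object that knows its own business meaning, so "which constraints are active?" becomes a lookup and not a code review. The `CapacityConstraint` class, Warehouse A's limit, and the plan are hypothetical; Warehouse B's limit of 500 comes from the example above.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CapacityConstraint:
    """A capacity limit that carries its own business description."""
    warehouse: str
    weekly_capacity: int

    def describe(self) -> str:
        return (f"Shipments through {self.warehouse} can't exceed "
                f"its weekly capacity of {self.weekly_capacity} units")

    def status(self, shipped: int) -> str:
        """'violated' above the limit, 'active' exactly at it, else 'slack'."""
        if shipped > self.weekly_capacity:
            return "violated"
        return "active" if shipped == self.weekly_capacity else "slack"


CONSTRAINTS = [
    CapacityConstraint("Warehouse A", 300),   # hypothetical limit
    CapacityConstraint("Warehouse B", 500),
]

# Hypothetical plan: weekly units routed through each warehouse.
PLAN = {"Warehouse A": 220, "Warehouse B": 500}


def active_constraints(constraints: List[CapacityConstraint],
                       plan: Dict[str, int]) -> List[str]:
    """Descriptions of the constraints the plan pushes right to their limit."""
    return [c.describe() for c in constraints
            if c.status(plan.get(c.warehouse, 0)) == "active"]


report = active_constraints(CONSTRAINTS, PLAN)
print(report)
```

The answer comes back in the language of the business ("Warehouse B", "weekly capacity"), not in solver indices.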

RelationalAI gives agents that substrate. Agents generate and run PyRel to build, extend, and query ontologies, formulate optimization problems, configure graph algorithms, and define business rules. The ontology keeps every answer grounded. The methodology lives in the stack so the analyst can stay focused on the business question.

Next: how AI agents make ontology-grounded reasoning accessible to data teams, taking them from a business question to a justified answer – no operations research, graph theory, or data science background required.